AI Governance: Where It Breaks

Most organizations believe they have AI governance under control because the controls they trust (identity, network boundaries, and data access) are in place and working as designed. In practice, those controls were built for conventional systems and do not fully align with how AI systems behave.

Retrieval Is Not Data Access; It Is Context Injection

In a traditional system, a query retrieves data from a database within a defined boundary. Access is explicit, scoped, and governed through identity and query constraints. In AI systems, retrieval as used in retrieval-augmented generation (RAG) behaves differently. Context is selected based on similarity rather than explicit access paths, and that context is injected directly into the model’s input.

This concern is well documented. Industry guidance consistently identifies retrieval as a distinct risk surface. The OWASP Top 10 for LLM Applications highlights sensitive information disclosure through retrieval pipelines, particularly when vector stores are not properly segmented or filtered. The NIST AI Risk Management Framework similarly notes that AI systems may expose or infer sensitive information through outputs or intermediate steps, even when direct data access is controlled. Once retrieved, that information becomes part of the model’s working context. It can be transformed, combined, and reflected in outputs. At that point, the boundary is no longer enforced by the system itself.
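As a minimal sketch of retrieval with an explicit scope, the Python below filters candidate chunks by the caller's permitted labels before ranking them by similarity. Everything in it is illustrative: the `Chunk` type, the `scope` label, and the `retrieve` function are hypothetical names, and the in-memory list stands in for whatever vector store is actually in use.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass(frozen=True)
class Chunk:
    text: str
    vector: tuple[float, ...]
    scope: str  # hypothetical access label carried over from the source data


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, store, allowed_scopes, k=2):
    """Return the top-k chunks by similarity, restricted to allowed scopes."""
    # Scope is applied BEFORE similarity ranking, so an out-of-scope chunk
    # can never reach the prompt, however similar it is to the query.
    candidates = [c for c in store if c.scope in allowed_scopes]
    ranked = sorted(candidates, key=lambda c: cosine(query_vec, c.vector), reverse=True)
    return ranked[:k]


store = [
    Chunk("Public holiday calendar", (0.9, 0.1), "public"),
    Chunk("Salary bands by grade", (1.0, 0.0), "hr"),
    Chunk("Office opening hours", (0.2, 0.8), "public"),
]

results = retrieve((1.0, 0.0), store, allowed_scopes={"public"})
print([c.text for c in results])
# ['Public holiday calendar', 'Office opening hours']
```

The most similar chunk in this toy store is the HR one, which is exactly why similarity alone cannot serve as the access decision.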

Embeddings Persist Meaning Outside Traditional Controls

In traditional systems, data sensitivity is tied to stored records and the access controls around them. AI systems introduce a parallel layer. Embeddings capture structure and relationships within data and make that information available for retrieval and inference. They are not copies of the original data, but what they preserve can still result in unintended data exposure.

This behavior is documented in both guidance and research. NIST notes that AI systems can encode sensitive attributes in derived representations that are not visible through standard classification methods. Research on model inversion and embedding leakage has demonstrated that latent representations can expose attributes of underlying data even when raw records are not directly accessible.

This creates a persistence layer for meaning that is rarely governed with the same rigor as source data. Once created, embeddings can be reused, queried, and combined in ways that extend beyond the original data boundaries.
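One way to picture governing embeddings alongside their source data is to keep lineage with every vector. The sketch below is an assumption-laden illustration, not a description of any particular vector database: `EmbeddingRecord`, `EmbeddingRegistry`, and the `classification` labels are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingRecord:
    vector: tuple[float, ...]
    source_id: str        # which source record this vector was derived from
    classification: str   # sensitivity label inherited from that source


class EmbeddingRegistry:
    """Hypothetical in-memory registry that tracks embedding lineage."""

    def __init__(self):
        self._records = []

    def add(self, vector, source_id, classification):
        record = EmbeddingRecord(tuple(vector), source_id, classification)
        self._records.append(record)
        return record

    def for_source(self, source_id):
        return [r for r in self._records if r.source_id == source_id]

    def purge_source(self, source_id):
        """Remove every embedding derived from a source; return how many."""
        before = len(self._records)
        self._records = [r for r in self._records if r.source_id != source_id]
        return before - len(self._records)

    def __len__(self):
        return len(self._records)


registry = EmbeddingRegistry()
registry.add([0.1, 0.2], source_id="doc-1", classification="confidential")
registry.add([0.3, 0.4], source_id="doc-1", classification="confidential")
registry.add([0.5, 0.6], source_id="doc-2", classification="public")

# When the source record is deleted or reclassified, its derived vectors
# can be located and handled the same way.
removed = registry.purge_source("doc-1")
```

With lineage attached, a deletion or reclassification of the source can be propagated to the vectors derived from it instead of leaving them behind.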

Routing Breaks the Assumption of a Fixed Execution Path

Traditional systems rely on deterministic execution paths that allow governance of where processing occurs and which systems are involved.

AI systems do not consistently operate that way. Routing decisions can determine which model processes a request at runtime, and those decisions may vary based on configuration, fallback logic, or system conditions. This introduces variability in execution location, provider, and processing behavior.

External guidance reflects this shift. The Cloud Security Alliance highlights dynamic model selection and external service invocation as a core risk area, particularly when organizations lack visibility into where inference is executed. The EU AI Act reinforces this by requiring traceability and transparency around model usage and processing context, recognizing that execution may shift across providers or regions. If execution paths are not fixed, then governance cannot rely on assumptions about where processing occurs.
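To illustrate what constrained and observable routing could look like, the sketch below checks each candidate target against an allowlist of provider and region pairs and records every decision, including rejected fallbacks. The provider names, regions, `Target` type, and `route` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    model: str
    provider: str
    region: str


# Hypothetical policy: the only provider/region pairs where inference may run.
ALLOWED = {("provider-a", "eu-west"), ("provider-b", "eu-central")}


def route(candidates, is_available, audit_log):
    """Pick the first permitted, available target and log every decision."""
    for target in candidates:
        permitted = (target.provider, target.region) in ALLOWED
        # Availability is only checked for targets the policy permits.
        selected = permitted and is_available(target)
        audit_log.append({
            "model": target.model,
            "provider": target.provider,
            "region": target.region,
            "permitted": permitted,
            "selected": selected,
        })
        if selected:
            return target
    raise RuntimeError("no permitted target is available")


candidates = [
    Target("model-large", "provider-a", "eu-west"),     # primary
    Target("model-fallback", "provider-c", "us-east"),  # fallback outside policy
    Target("model-small", "provider-b", "eu-central"),  # fallback inside policy
]
down = {"model-large"}  # simulate an outage of the primary
audit_log = []
chosen = route(candidates, lambda t: t.model not in down, audit_log)
```

In this run the primary is unavailable, the first fallback is rejected by policy, and the request lands on `model-small`; the audit log holds all three decisions, so the execution path can be reconstructed afterwards.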

Behavior Is Determined at Runtime, Not Design Time

The most significant change is not any single component. It is that system behavior is no longer fully defined by architecture alone. In AI systems, behavior depends on retrieved context, prompt composition, model responses, and routing decisions. These factors interact at runtime, and the resulting execution path is not always predictable from design artifacts.

This is a documented characteristic of these systems. The NIST AI Risk Management Framework describes AI systems as probabilistic and context-dependent, while the UK National Cyber Security Centre notes that LLM-based systems introduce non-deterministic behavior and emergent properties.

Traditional governance assumes that behavior can be understood from system design and enforced through static controls. That assumption does not hold in this model.
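If behavior is determined at runtime, one option is to record what each step actually did for a given request. The following is a rough sketch under stated assumptions: `RunTrace`, its step names, and the recorded fields are hypothetical, and a real system would also need durable storage and access controls for the traces.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class RunTrace:
    """Hypothetical per-request record of what the pipeline actually did."""
    request_id: str
    events: list = field(default_factory=list)

    def record(self, step, **details):
        self.events.append({"step": step, **details})

    def digest(self):
        # Hash of the whole trace, so later modification is detectable
        # when the digest is stored separately from the trace itself.
        payload = json.dumps(
            {"request_id": self.request_id, "events": self.events},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


prompt = "Context: Public holiday calendar\nQuestion: When is the office closed?"

trace = RunTrace(request_id="req-001")
trace.record("retrieval", chunk_ids=["chunk-17", "chunk-42"], scopes=["public"])
# Store a hash of the composed prompt rather than the prompt text itself.
trace.record("prompt", sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest())
trace.record("routing", model="model-small", provider="provider-b", region="eu-central")

evidence_id = trace.digest()
```

The trace covers the factors named above (retrieved context, prompt composition, and routing), so a reviewer works from what happened rather than from the design diagram.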

Why This Breaks Governance

Identity, network security, and data access policies remain necessary and effective within their intended scope. The failure is in the gap those controls do not address. Governance assumes a system where execution is linear and deterministic. AI systems operate as multi-step, runtime-driven pipelines where behavior is influenced by context and system state.

As a result, controls are enforced at layers that no longer define system behavior, while the layers that do (retrieval, composition, routing, and runtime execution) remain insufficiently governed. This is why organizations encounter issues that appear inconsistent or difficult to explain: unintended exposure of information, variability across environments, and gaps in traceability. These are not isolated failures. They are outcomes of a misalignment between governance models and system behavior.

What This Implies for Governance

If governance is to remain effective, it must be extended to the surfaces that actually define system behavior. Retrieval must be treated as a controlled operation with explicit scope and boundaries. Embeddings must be recognized as derived artifacts that carry forward information from source data. Routing must be observable and constrained so that execution paths are understood and controlled. Runtime behavior must be captured as evidence rather than inferred from intended design.

These are not new principles. They are extensions of existing governance models applied to new system behaviors.

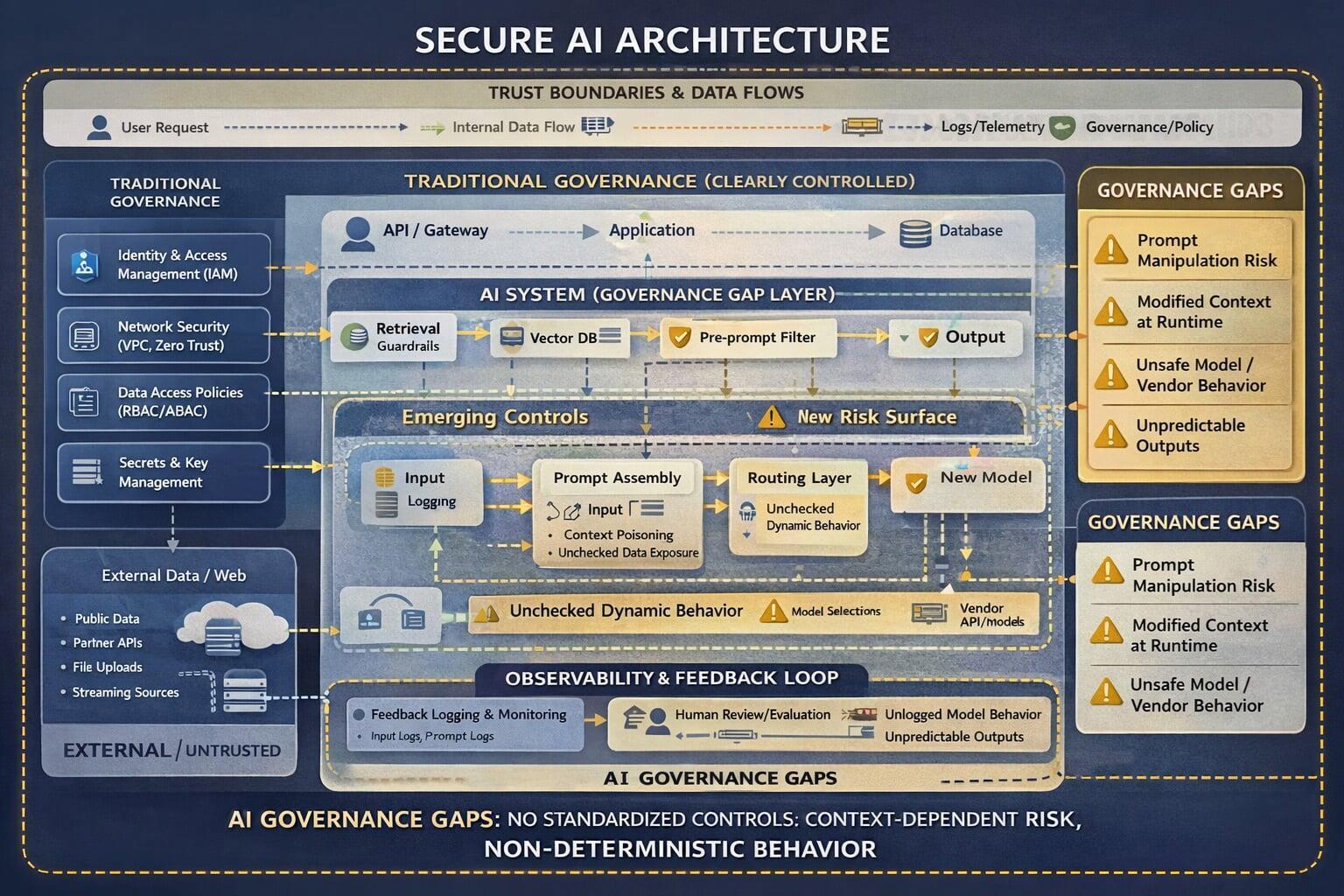

Representation of the Gap

The accompanying diagram is a simplified representation of the gap between traditional governance and AI system behaviors. Most organizations are securing the system they think they have, where execution is linear and boundaries are implicit. But AI systems are multi-step, context-driven, and influenced by runtime conditions.

Governance does not need to be reinvented. It needs to be applied to the system that actually exists.